📌 AI-powered brain implant, IBM and NASA Open Source AI Model, Robotics Transformer 2, Audiocraft by Meta, PhotoGuard and more

Greetings and welcome to this week's AI Brews for a concise roundup of the week's major developments in AI.

In today’s issue (Issue #26):

AI Pulse: Weekly News & Insights at a Glance

AI Toolbox: Product Picks of the Week

AI Skillset: Learn & Build

🗞️🗞️ AI Pulse: Weekly News & Insights at a Glance

🔥 News

In an innovative clinical trial, researchers at Feinstein Institutes successfully implanted a microchip in a paralyzed man's brain and developed AI algorithms to re-establish the connection between his brain and body. This neural bypass restored movement and sensations in his hand, arm, and wrist, marking the first electronic reconnection of a paralyzed individual's brain, body, and spinal cord [Details].

IBM's watsonx.ai geospatial foundation model – built from NASA's satellite data – will be openly available on Hugging Face. It will be the largest geospatial foundation model on Hugging Face and the first-ever open-source AI foundation model built in collaboration with NASA [Details].

Google DeepMind introduced RT-2 (Robotics Transformer 2), a first-of-its-kind vision-language-action (VLA) model that can directly output robotic actions. Just as language models are trained on text from the web to learn general ideas and concepts, RT-2 transfers knowledge from web data to inform robot behavior [Details].

Meta AI released AudioCraft, an open-source framework to generate high-quality, realistic audio and music from text-based user inputs. AudioCraft consists of three models: MusicGen, AudioGen, and EnCodec [Details | GitHub].

ElevenLabs now offers its previously enterprise-exclusive Professional Voice Cloning model to all users at the Creator plan level and above. Users can create a digital clone of their voice, which can also speak all languages supported by Eleven Multilingual v1 [Details].

Researchers from MIT have developed PhotoGuard, a technique that prevents unauthorized image manipulation by large diffusion models [Details].

Researchers from CMU show that it is possible to automatically construct adversarial attacks on both open- and closed-source LLMs: specifically chosen sequences of characters that, when appended to a user query, cause the system to obey user commands even if doing so produces harmful content [Paper].

Together AI extended the context length of Meta’s LLaMA-2-7B from 4K to 32K tokens and released the result as LLaMA-2-7B-32K [Details | Hugging Face].

AI investment could approach $200 billion globally by 2025, according to a report from Goldman Sachs [Details].

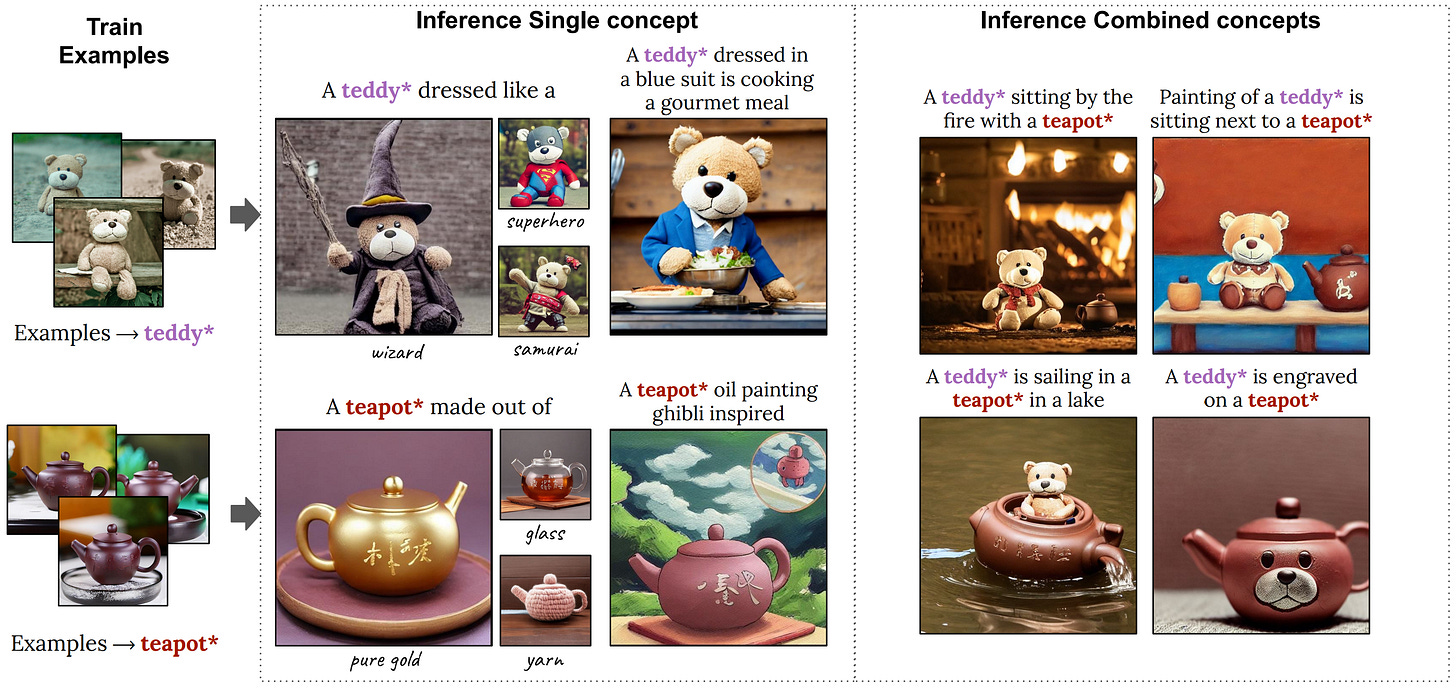

Nvidia presents a new method, Perfusion, that personalizes text-to-image creation using a small 100KB model. Trained for just 4 minutes, it creatively modifies objects' appearance while keeping their identity through a unique "Key-Locking" technique [Details].

Perplexity AI, the GPT-4 powered interactive search assistant, released a beta feature that lets users upload documents, code, or research papers and ask questions about them [Link].

Meta’s LLaMA-2 Chat 70B model outperforms ChatGPT on the AlpacaEval leaderboard [Link].

Researchers from LightOn released Alfred-40B-0723, a new open-source large language model (LLM) based on Falcon-40B and aimed at reliably integrating generative AI into business workflows as an AI co-pilot [Details].

The Open Source Initiative (OSI) accuses Meta of misusing the term "open source" and says that the license of LLaMA models such as LLaMA-2 does not meet the terms of the Open Source Definition [Details].

Google has updated its AI-powered Search Generative Experience (SGE) to include images and videos in AI-generated overviews, and has sped up how quickly those overviews are returned [Details].

YouTube is testing AI-generated video summaries, currently appearing on watch and search pages for a select number of English-language videos [Details].

Meta is reportedly preparing to release AI-powered chatbots with different personas as early as next month [Details].

🔦 Weekly Spotlight

The state of AI in 2023: Generative AI’s breakout year, the latest annual McKinsey Global Survey [Link].

Winners from Anthropic’s #BuildwithClaude hackathon last week [Link].

Open-source project Ollama: Get up and running with large language models, locally [Link].

Cybercriminals train AI chatbots for phishing, malware attacks [Link].

🔍 🛠️ AI Toolbox: Product Picks of the Week

MyMap: Map out ideas with AI Copilot. An AI-native app that streamlines the idea curation flow, from brainstorming and organizing to presenting.

AngelList Relay: an AI-powered portfolio analyzer that automatically extracts information as structured data from email correspondences.

Hireguide: Uses AI and hiring science to help teams create structured interviews that screen for skill and automate interview note-taking.

📕 📚 AI Skillset: Learn & Build

Part 1 of a five-part course by Wharton School on YouTube that provides an overview of AI large language models for educators and students [Link].

Guide on fine-tuning Llama 2 in your own cloud environment, using a 100% open-source tool [Link].

Practical data considerations for building production-ready LLM applications — Google slides by Jerry Liu, LlamaIndex co-founder/CEO [Link].

Securing LLM systems against prompt injection - a guide by Nvidia [Link].

✨ ✨ If you find value in AI Brews, you can support via our Patreon page. Thanks for reading and have a nice weekend! 🎉 Mariam.
