Qwen-Image-Edit, Mirage 2, Runway Game Worlds Beta, Seed-OSS, DeepSeek-V3.1, Agents.md, Nemotron Nano 2 and more

Hey there! Welcome back to AI Brews - a concise roundup of this week's major developments in AI.

In today’s issue (Issue #110):

AI Pulse: Weekly News at a Glance

Weekly Spotlight: Noteworthy Reads and Open-source Projects

AI Toolbox: Product Picks of the Week

🗞️🗞️ Weekly News at a Glance

Dynamics Lab unveiled Mirage 2, a real-time, general-domain generative world engine that transforms your own images into playable environments that can be shared online. You can also chat with Mirage 2 in real time to alter the game world on the fly [Details | Demo].

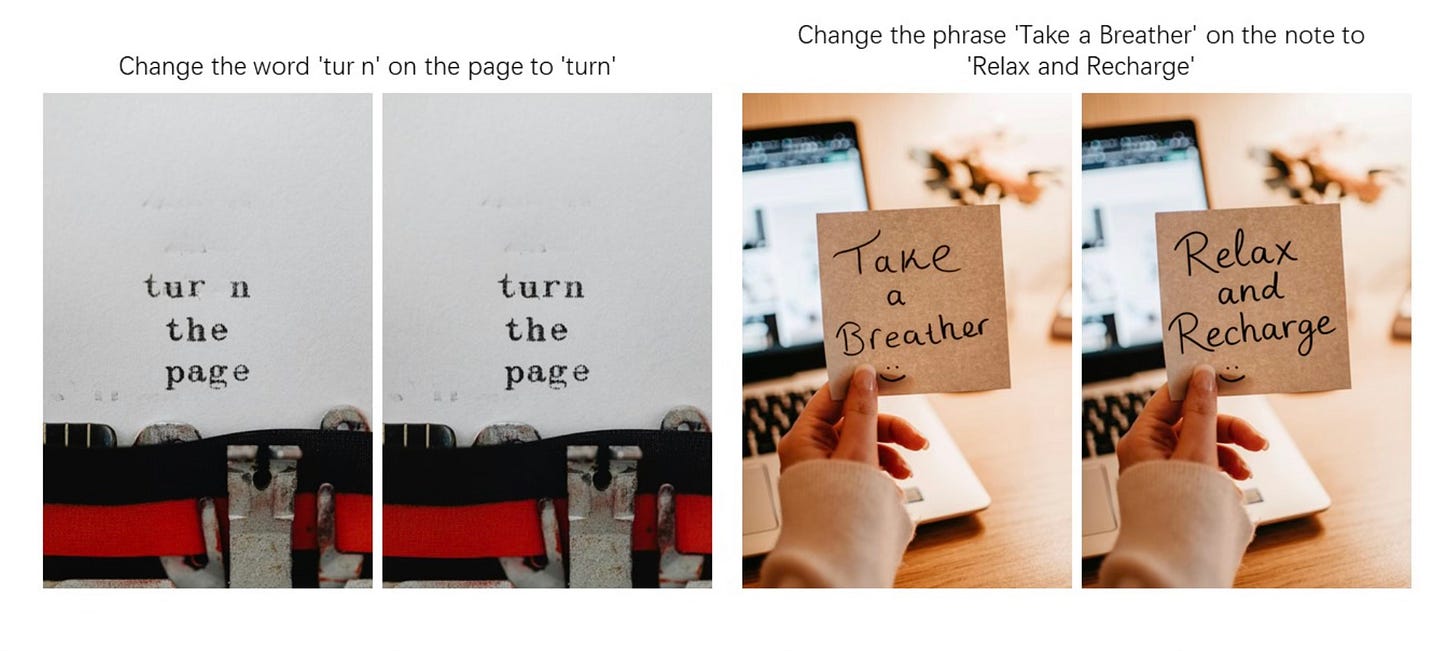

Alibaba Qwen Team released Qwen-Image-Edit, an open-source image editing version of the 20B Qwen-Image model. It supports both low-level visual appearance editing (such as adding, removing, or modifying elements, requiring all other regions of the image to remain completely unchanged) and high-level visual semantic editing (such as IP creation, object rotation, and style transfer, allowing overall pixel changes while maintaining semantic consistency) [Details].

Runway launched Runway Game Worlds Beta. Every game session is generated in real time with personalized stories, characters, and multi-modal media generation. In the beta, you can choose from a series of preset text-based games or create games of your own, which are private by default but can be made public for anyone to play or share [Details].

DeepSeek released DeepSeek-V3.1, a hybrid model that supports both thinking mode and non-thinking mode with improved performance in tool usage and agent tasks. DeepSeek-V3.1-Think achieves comparable answer quality to DeepSeek-R1-0528, while responding more quickly [Details].

Google is adding new agentic capabilities and personalized responses to AI Mode in Search, starting with finding restaurant reservations, and expanding soon to local service appointments and event tickets. AI Mode is also now available in 180 new countries in English [Details].

NVIDIA released the Nemotron Nano 2 family of accurate and efficient hybrid Mamba-Transformer reasoning models. NVIDIA-Nemotron-Nano-v2-9B achieves comparable or better accuracy on complex reasoning benchmarks than Qwen3-8B, the leading open model of comparable size, at up to 6x higher throughput [Details].

Anthropic launched three new free AI fluency courses, co-created with educators, to help teachers and students build practical, responsible AI skills [Details].

ByteDance Seed team released the Seed-OSS family of open-source LLMs with native long-context support of up to 512K tokens. The release includes Seed-OSS-36B-Base (in versions with and without synthetic data) and Seed-OSS-36B-Instruct. Although trained on only 12T tokens, Seed-OSS achieves excellent performance on several popular open benchmarks [Details].

Z.ai released GLM-4.5V, an open-source visual reasoning model that delivers state-of-the-art performance among open-source models in its size class, dominating across 41 benchmarks. GLM-4.5V is based on ZhipuAI’s next-generation flagship text foundation model GLM-4.5-Air (106B parameters, 12B active). It covers common tasks such as image, video, and document understanding, as well as GUI agent operations [Details].

Jan released Jan-v1, a 4B model for web search positioned as an open-source alternative to Perplexity Pro. It delivers 91% SimpleQA accuracy, slightly outperforming Perplexity Pro while running fully locally. Jan-v1 uses the Qwen3-4B-thinking model to provide enhanced reasoning capabilities and tool utilization [Details].

ByteDance Seed introduced M3-Agent, an open-source multimodal agent framework that processes real-time visual and auditory inputs to build and update its long-term memory [Details].

OpenAI launched a sub-$5 ChatGPT plan in India [Details].

🔦 🔍 Weekly Spotlight

Open-Source Projects:

Agents.md: A simple, open format for guiding coding agents, used by over 20k open-source projects

LIA-X: an interpretable portrait animator designed to transfer facial dynamics from a driving video to a source portrait with fine-grained control

MemU: an open-source memory framework for AI companions

🔍 🛠️ Product Picks of the Week

Parallel: A web API purpose-built for AIs, with state-of-the-art results across several benchmarks.

Genspark AI Developer: the L4 autonomous AI coding agent that enables everyone to build without coding skills - video.

Grammarly’s AI Agents: an AI Grader that gives feedback aligned to your rubric and course info, automatic citation generation as you write, checks for AI-generated content in your writing, and more.

Qoder: An agentic coding platform, free to access during the preview.

Thanks for reading and have a nice weekend! 🎉 Mariam.